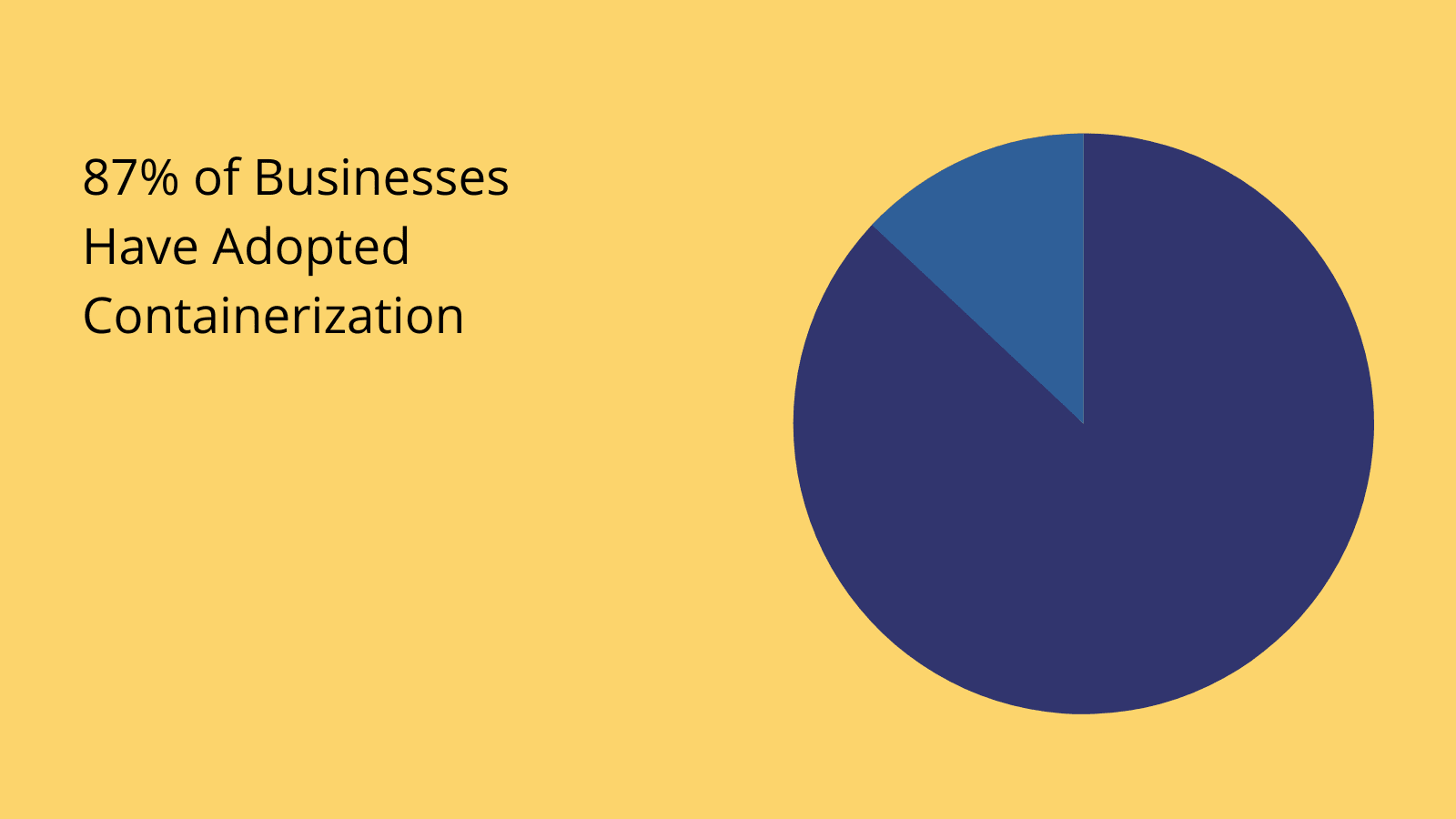

Containerization is one of the decades’ biggest shifts in the way businesses manage their internal IT - with huge implications for how we develop software, manage existing tools, handle business data, and much more.

So much so, that containerization has become a top priority for IT leaders in all industries.

Today, we’re checking out everything you need to know about working with containers - from what they are and what they achieve - to the challenges you might encounter and the specific tools you can use for successful implementation.

By the end of this guide, you’ll have a complete working understanding of how containerization works - and some of the specific strategies you can employ to leverage it within your own IT operations efforts.

Let’s start with the basics.

What is containerization?

Containerization is an approach to hosting software where developers bundle all of the files needed to run a solution into a single package. This includes the source code, runtime files, libraries, frameworks, libraries, and any other dependencies.

Basically, the idea is that we can treat all of the elements that make up an application as a single unit - for the purposes of hosting and deployment.

The goal is to achieve a high degree of abstraction.

In other words, we’re ultimately trying to make it easier to run our applications - in just about any kind of environment.

Since everything that’s needed to run an application is included in its container, we’re able to eliminate issues that might stem from incompatibility with a local operating system. The container acts as a kind of bubble around the solution.

In this way, it’s functionally independent of the environment or end-user’s local operating system.

(BMC)

But - that’s just a very high-level definition. Things are obviously a lot more complex in the nitty-gritty of the real world.

So…

How does containerization work?

Really, there are two basic tasks to account for here:

- Packaging the resources we need into a container.

- Running applications in containers - no matter the environment.

Both elements require specific containerization tools.

The first element is relatively straightforward. All of the files needed to run an application are bundled into a single container file, which is then converted into a container image.

This becomes a real container at runtime.

Making containerized code runnable requires a runtime engine.

This allows the containerized solution to share the host machine’s operating system kernel - negating the need for a dedicated OS for each container - and allowing containerized tools to be environment-agnostic and portable.

We’ll check out some specific examples of containerization platforms toward the end of this guide.

Benefits of containerization

But first, let’s explore why containerized apps are such a hot issue. In other words, what benefits can we achieve by moving to a container-based model?

Here are the top selling points.

Portability

Portability is the extent to which we’re able to easily move solutions from one environment to another. Containerization helps in a couple of key ways here.

With a high degree of abstraction, containerized software isn’t dependent on a particular kind of host - so it can effectively run anywhere. Since containerized software can run in any environment, it’s much easier to deploy and host it on new infrastructure or different locations.

This is further enhanced by the fact that we can treat the entire container as a single unit during migrations, helping us to minimize service disruptions.

(DataDog)

Agility

Containerization is ideally suited to Agile processes, within development, DevOps, and other internal IT processes. Essentially, containerization empowers IT teams to create, test, and deploy solutions - with greatly reduced lead times and resource burdens.

For example, containerization allows us to use common, universal development tools across all of our teams, machines, and environments.

In turn, this can facilitate expedited developments, improved collaboration, and higher levels of agility within our IT team.

Fault isolation

Fault isolation means that individual solutions are separated from one another - so, if something goes wrong with one, the impact on others is minimized. This is tightly linked to the microservices architecture.

Similarly, if the underlying infrastructure or computer system is affected by an issue, then a containerized solution can quickly be moved to a different host while the DevOps or network team sorts out the problem at hand.

Therefore, containerization can offer a hugely improved degree of reliability across IT services.

Security

Containerization also tangible improves security. On the one hand, isolation minimizes the impact of security breaches when they do occur. For instance, in the case of a deliberate attack, exposure is limited to the affected containers.

We can also implement a high degree of granularity in our access control, authentication, and authorization policies within containerized ecosystems.

Containerization also enables us to isolate any nodes that have been compromised, more or less immediately.

Efficiency

Efficiency is a huge impetus towards containerization. This actually plays out at two separate levels.

First, there’s the speed and ease with which we’re able to develop and deploy new solutions - helping us to make efficiency savings within our IT team’s daily workflows.

Second, there’s the wider impact on organizational efficiency. Containerization allows us to get user-facing applications online faster, drastically cutting the time to value of custom solutions.

Scalability

Finally, we have scalability. This is how easily we’re able to expand our capabilities - either by adding new functionality or increasing the resources dedicated to existing functions.

In the context of containerization, we’re really talking about two things here.

First, there’s horizontal scaling - ie our ability to add entirely new containers. On the other hand, there’s vertical scaling - which relates to adding additional infrastructure resources to existing containers.

Containerization challenges

Of course, nothing is ever 100% plain sailing. Indeed, when it comes to containerization, there are a number of potential challenges that we’ll need to account for within application development.

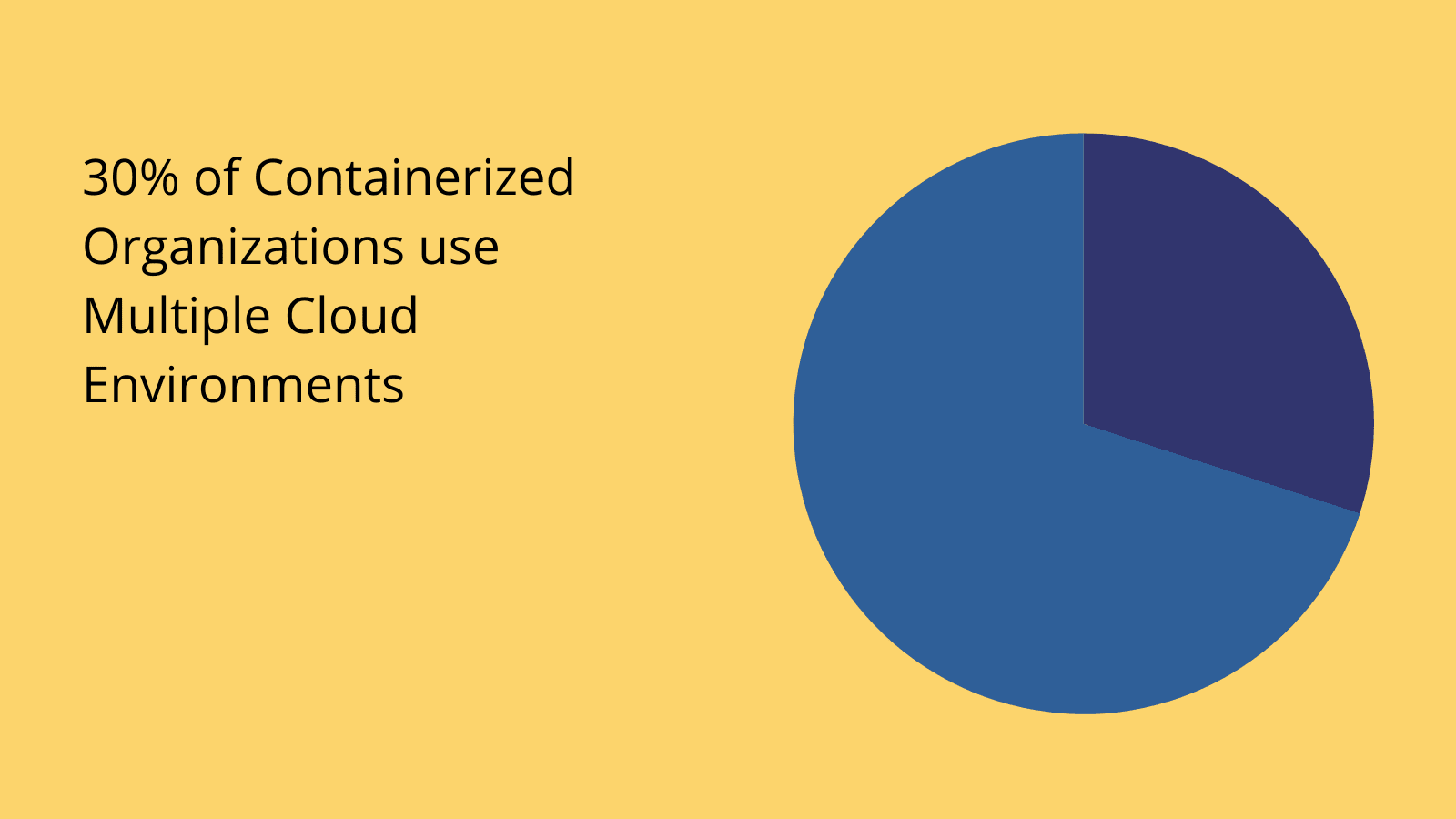

Chief among these is the fact that working with large numbers of separate, containerized solutions is inevitably going to make things difficult to monitor, manage, and maintain - at least manually.

This is particularly challenging when you consider the fact that containers will often move across different servers and tenants. In turn, this makes it tricky to keep individual tools within the wider ecosystem working together effectively.

The key here is what’s known as container orchestration.

Orchestration is the automation of container deployment, management, monitoring, maintenance, and administration tasks.

So, we can tell container orchestration tools where we want our software to be deployed at any given moment, and the platform will essentially take care of everything for us. We’ll see some examples of these kinds of tools a little bit later.

Join 300,000 teams running operations on Budibase

Get started for freeContainers vs virtual machines

This is an important question - as well as one where a lot of people get confused. So, it’s worth clarifying the exact differences between containers and virtual machines - as well as where they overlap.

A virtual machine (VM) is an environment that effectively behaves like a real-life computer. So, it has its own CPU, memory, networking, and storage, built on physical infrastructure.

Like containerization, VMs also offer a high degree of isolation, enabling applications to run in different computing environments.

However, VMs will typically have a much larger storage footprint and incur greater computing resources - in part because they’re bundled with their own operating systems - whereas containerized solutions use shared operating systems.

This can also come with a tradeoff in terms of performance - although it does make VMs more suitable to certain, resource-intensive tasks compared to containers.

Virtual machines are largely suited to traditional, monolithic IT infrastructure models, while containerization has emerged to meet the needs of performant, distributed, cloud-based IT ops.

When is containerization used?

Next, we can start to think about some of the more specific situations where containerization is useful - and the value that we can derive in the context of different kinds of processes.

Development

First, we have the software development process. One of the key benefits of containerization here is the relative ease of creating separate development, testing, and production environments - on-premise or in the cloud.

In turn, this makes it much faster and easier to move application code into new environments - and work on new features without causing unnecessary service interruptions.

On top of this, containerization makes it considerably easier to carry out a range of other development tasks, including implementing specific security protocols, automating deployments, and managing software across its entire lifecycle.

Self-hosting existing tools and legacy modernization

However, containerization isn’t necessarily reserved for custom solutions. In fact, containerization is also one of the most popular strategies for legacy app modernization.

In fact, this is often a viable solution to take advantage of the benefits of containerization, without incurring the same costs as we might with full-on refactoring or redevelopment. This is sometimes referred to as a lift and shift transformation.

We can also apply to same logic to any other software packages that we can self-host.

Distributed data management

Finally, we have database containerization. As the name suggests, this means moving our data and DBMSs to container-based solutions, in exactly the same way we would with applications.

This is a somewhat more novel approach - with many of the major database vendors only recently adding official support.

As data ops become increasingly automated, centralized, and cloud-based, we’re also seeing a huge movement towards containerization of data assets.

Check out our ultimate guide to IT transformation to see how else day-to-day operations are changing for technical teams.

Containerization tools

Finally, let’s check out some of the big players in the containerization tools market. There are two key platforms that dominate the landscape here. There are plenty of alternatives out there, but these are the benchmarks.

Let’s take a look at each in turn.

Docker

Docker is the tool that first brought containerization to the fore. Launched in 2013, it’s an open-source platform that uses OS-level virtualization to deploy and manage software containers.

It provides an easy-to-use, scalable solution for creating Linux containers, for building, testing, and running distributed applications.

Using the Docker engine as a client-server application, alongside a series of APIs and a dedicated CLI, developers around the world are able to take advantage of containerization in order to ship solutions to the cloud.

Kubernetes

Kubernetes is the best-known platform for managing, automating, and scaling the deployment of containerized applications. Whereas Docker provides its own runtime environment, Kubernetes allows developers to handle multiple engines simultaneously.

The idea is to automate many of the processes that are involved in container management and orchestration. A huge part of this is clustering hosts into logical groupings, and moving containers between them using a visual automation interface.

This makes Kubernetes an ideal platform for hosting and deploying scalable distributed applications in the cloud.