Databases are not new. Having ways to be able to store data, query it, and serve it back to our users has been a key focus of web application development for decades. But, that doesn’t mean things have stood still.

Today, we’re checking out the future of databases - with 8 data management trends and 11 real-world examples.

Relational databases that were developed in the 70s still provide the backbone of most modern IT. However, more and more businesses are turning to more novel solutions.

Companies have worked to be able to provide us with new features. This means faster ways to access our data - new approaches representing data that are more like the real world, or more like the realm of knowledge we're trying to model.

Today, we want to talk about some of the database trends that are driving this change. Some of the companies that are leading the charge.

By the end, you’ll be able to take advantage of these new developments and use these futuristic databases in your next project.

Let's dive in.

How is data changing?

The role of data in day-to-day business operations has fundamentally changed in the past decade.

They say that data is the new oil. Organizations today store and process more information than at any point in history. This has brought about a huge range of benefits - but it also introduced new challenges.

Specifically, we need secure, performant solutions for storing, accessing, and using large, diverse data sets. We must also retain data quality, accuracy, and integrity while empowering business users with the insights they need.

This has led to a boom in new approaches to storing and handling data.

Join 300,000 teams running operations on Budibase

Get started for free8 data management trends

Today, we’re checking out eight data management trends in 2023 - that are increasingly dominating the landscape. We’ll also check out some examples of each - totaling eleven new and exciting platforms.

Without further ado, let’s dive in.

1. Serverless databases

So first up we have serverless databases.

Now serverless is not a new concept - But it is something that has been getting more and more popular.

Despite the name, it doesn't mean no servers. What it means is that someone else is responsible for running, managing, and maintaining the infrastructure that your cloud databases are built on top of.

That makes it easier to get started. You don’t have to set up the infrastructure or work through the technical details of getting everything set up. You can just get your data up and running.

It's often more cost-effective because you pay for what you're using.

And generally, it's a scalable solution. It's easier to get more databases spun up. You ask for them and the serverless database provider gives them to you.

There are two companies we’d like to highlight that are taking those 70s-style databases and making them available in a serverless way.

PlanetScale

First off, we've got PlanetScale.

It provides MySQL in a serverless way. We get a great dashboard, to monitor our traffic and see how things are going. We also get highly optimized and cached queries, ideal for a huge range of data processes.

In terms of pricing, there's a generous free tier. As our needs for more reliability and more deployments to other areas increase, then we can increase our spending.

PlanetScale also offers separate production and development databases at the click of a button.

SupaBase

Postgres is another database that has been around for a long time. Superbase is making that available in a serverless way.

If you’ve used Firebase, Superbase is a great alternative that allows us to manage our data serverlessly. It manages authentication, and we can get set up with edge functions. That is, functions that are applied to our data from the edge.

Again, there's a generous free tier for when you're getting started and pricing increases based on your usage - and your availability needs, keeping your database warm and ready as things go on.

2. Cloud-native databases

The next trend we want to think about is cloud-native databases. These don’t just run in the cloud - but are built for the cloud from the ground up.

That tends to mean they're more resilient, they self-heal better, and they may take advantage of distributed processing in ways that other databases don't.

Like serverless databases, these can be scaled up as the need arises. And - things like backups, updates, and scaling can all be automated. These databases have been designed with the cloud in mind, so they take full advantage of those features from the ground up.

FaunaDB

FaunaDB is a database that was built with the cloud in mind. It's fast and it's reliable. Fauna offers a TypeScript-inspired developer experience.

You can set types - and the database enforces that schema on the documents that you store. This also creates a powerful way to query your data and retrieve what you want in the way you want it - especially in the context of big data analytics.

It looks like TypeScript. So if you're coming from TypeScript or JavaScript, this will feel really familiar.

As well as being cloud-native, Fauna is the first document database that we've looked at - a type of NoSQL.

That means as our data changes shape over time, or if we have unstructured data - like logs or IoT device feedback - we can accept that data in its source form and process it after the fact.

FaunaDB offers a pro plan that you've got free access to when you sign up for 30 days.

More advanced plans come with things like logging, unlimited developers, unlimited databases, big three per throughputs, multi-region support, and more.

There’s also a free plan. This is mostly designed for people who are learning Fauna. So - it imposes quite heavy limits on what you might need, but you can remove those limits for as little as $25 a month to be able to explore and scale your use case.

3. Branching

Next up, databases offering branching. If you've used Git before, making branches and merging back to main will be a very familiar workflow.

Now there are databases that allow you to do the same thing. We can check out new branches which will take a snapshot of the data and structure at that point in time.

Then, we can make changes to that structure and merge it back into production - once we're happy that the new data structure is something that we want to implement.

NeonDB

One of the companies that introduced us to branching is Neon. Neon is a serverless Postgres database, much like Superbase.

Crucially though, it also provides branching capabilities.

On the free tier, you can have up to ten branches. You can create new branches from the dashboard - or you can have them automatically generated based on events, like a git push, a new feature branch, or other triggers.

Neon acts just like a normal Postgres database, so we're able to interact with it in much the same way as we’re used to - just with this added advantage of branching.

In terms of pricing, NeonDB also offers a generous free tier, with one project, 10 branches, and 3GB of storage.

Pricing is based on a range of metrics to scale based on what you use.

So if you have customers who are using your service a lot, you're going to be paying more. If your service is not being used or just in development, you're not going to be paying anything - or not very much.

You can branch with just a single click through the API, by deploying and then reintegrating.

You can also create a preview version of the database for every PR. This allows for really good isolation of data with large teams who are collaborating across multiple features simultaneously.

4. Multi-model databases

Next up, multi-model databases. These are databases that are designed to integrate different types of data through a single backend. This means they can accommodate data in different types - such as relational, nonrelational, or graph data.

This empowers developers to make the choice that they need for their use case, while not isolating that data from the rest of the application or ecosystem.

The name of the game here is versatility. Multi-model data gives more flexibility to developers.

This also allows for improved and highly targeted efficiency. There are times when you're developing a feature that would work better in a relational database, even though the rest of your application is built with a document or NoSQL database, and vice versa.

And because the data is being integrated through a single back end, we can also achieve high levels of consistency.

SurealDB

SurealDB bills itself as the ultimate multi-model database. It provides a query language that works like traditional SQL but can be applied against graphs and time series data.

It has incredible query performance and manages to enable machine learning, alongside other exciting features.

At the moment, they don't offer a hosted experience for their database. Instead, you need to spin up a machine to be able to run this yourself. So it requires some more DevOps knowledge to use.

But the efficiency and flexibility may be worth it for you.

Couchbase Capella

Couchbase Capella is another database that's built with multi-model data in mind.

It allows you to consolidate multiple data technologies into one architecture. Everything is ingested into Couchbase, and then it's queryable by their API.

Ultimately, Capella is a NoSQL document-based database, so the data structure tends to be human-readable like standard JSON. You can also query data using SQL-like syntax - including, select statements with joins.

If you're coming from a SQL background and you want to dip your toes into NoSQL, Capella can be a really good middle ground as you decide which way to go.

There’s no free tier but you can set up Couchbase in a range of cloud platforms on your own, rather than having them manage the infrastructure - enabling us to air-gap our data.

There’s also a managed option. Couchbase bills by time rather than usage because the nodes are always up, always have access to the data, and are always processing that data.

5. Graph databases

Next up are graph databases. These are NoSQL databases where the relationships between the data are seen as important as the data themselves. They're particularly useful when we're thinking about highly connected data like social networks or supply chains.

Graphs are also used for recommendation engines or fraud detection.

There are three core components.

- There's the node, which represents the particular entities - like a person or product.

- There are the edges, which are how the different nodes are connected.

- And there are key-value pairs called properties that exist on either the nodes or the edges.

Graph databases are highly efficient when we're trying to explore relationships.

They're quite flexible. It's easy to add new relationships and nodes. They're also quite intuitive because it's how the real world tends to work in terms of how we think about things in relation to one another.

As the dataset grows, graphs stay performant in terms of querying. They don't require joins because those relationships are already embedded in the database.

Memgraph

One very common implementation for graph databases is Neo4j, but Memgraph presents itself as a more performant, faster implementation of Neo4j.

You can run Memgraph on Docker, on your local infrastructure, on Community Edition, for free. You can scale to billions of nodes and edges and stay really performant.

We're also able to use any graph that you might have implemented already with Neo4j, which you can bring with you into Memgraph relatively easily.

In terms of pricing, the Community Edition is free. There's an Enterprise Edition which includes things like auth, role-based access control, and full activity auditing - for advanced data security.

Memgraph has also recently released a cloud beta version with support for multi-regions, automated updates, and managed backups.

Pricing depends on how big you want your instance to be. If you're running it in the cloud, one instance of one gigabyte starts at $25 per month.

Check out our other tutorial on building a SQL GUI.

6. Time-series databases

Another trend in databases is time series databases. These are databases where every data point has a timestamp. Time series data might be measurements or events that are tracked and aggregated over time.

We'd have things like temperature, stock price, CPE usage, and other real-world phenomena.

Time series databases need to be optimized for high write volume. So, because we're expecting to write to this database pretty continuously, processing those writes quickly and efficiently is going to be critical.

We also need capabilities around aggregating data - like computing sums and averages over defined time periods to be able to garner insights.

Generally, there will also be defined retention policies. You might have so much data that you'll think about how long you want to keep it.

Time series need to be highly scalable. These databases tend to scale horizontally to handle growing data size. Often they also feature advanced compression - given the high volumes of data we might store.

Some time series databases work with specialized query languages - while others support SQL-like querying.

InfluxDB

InfluxDB is a great example of a time series database.

It's single-purpose - and it runs at scale in the cloud, on-premises, or at the edge. So you can run this serverlessly or on your own infrastructure.

Influx also natively supports SQL, alongside a range of other methods to interrogate your data.

For instance, InfluxDB also comes with SDKs for pretty much every language that you can think of - including JavaScript, Python, Go, C Sharp, and Java.

In terms of pricing, we can, you can start for free.

This gives us credits for their fully managed, elastic, multi-tenant service. You only pay for what you use when you exceed these credits.

Helpfully, Influx also offers a single-click install to get up and running on the self-hosted plans.

You might also like our guide to digital transformation platforms.

7. Embedding AI in databases

The next trend we want to talk about is embedding AI into databases. Embedding AI brings really powerful data management and analysis tools directly into our database.

As database administrators and developers, we're able to leverage AI and machine learning in situ. For example, leveraging AI models using SQL or to enhance and enrich our data using machine learning.

There are lots of benefits in doing this directly inside of the database.

First of all, it's easier to use - with the same query language we’re already familiar with - so we can leverage AI without learning any new technologies.

For one thing, it can be more efficient, performant, and secure. We're not moving data from our database to another system for processing.

It's happening in situ, which means that we're reducing costs and lowering the risks for various attacks and data breaches.

MindsDB

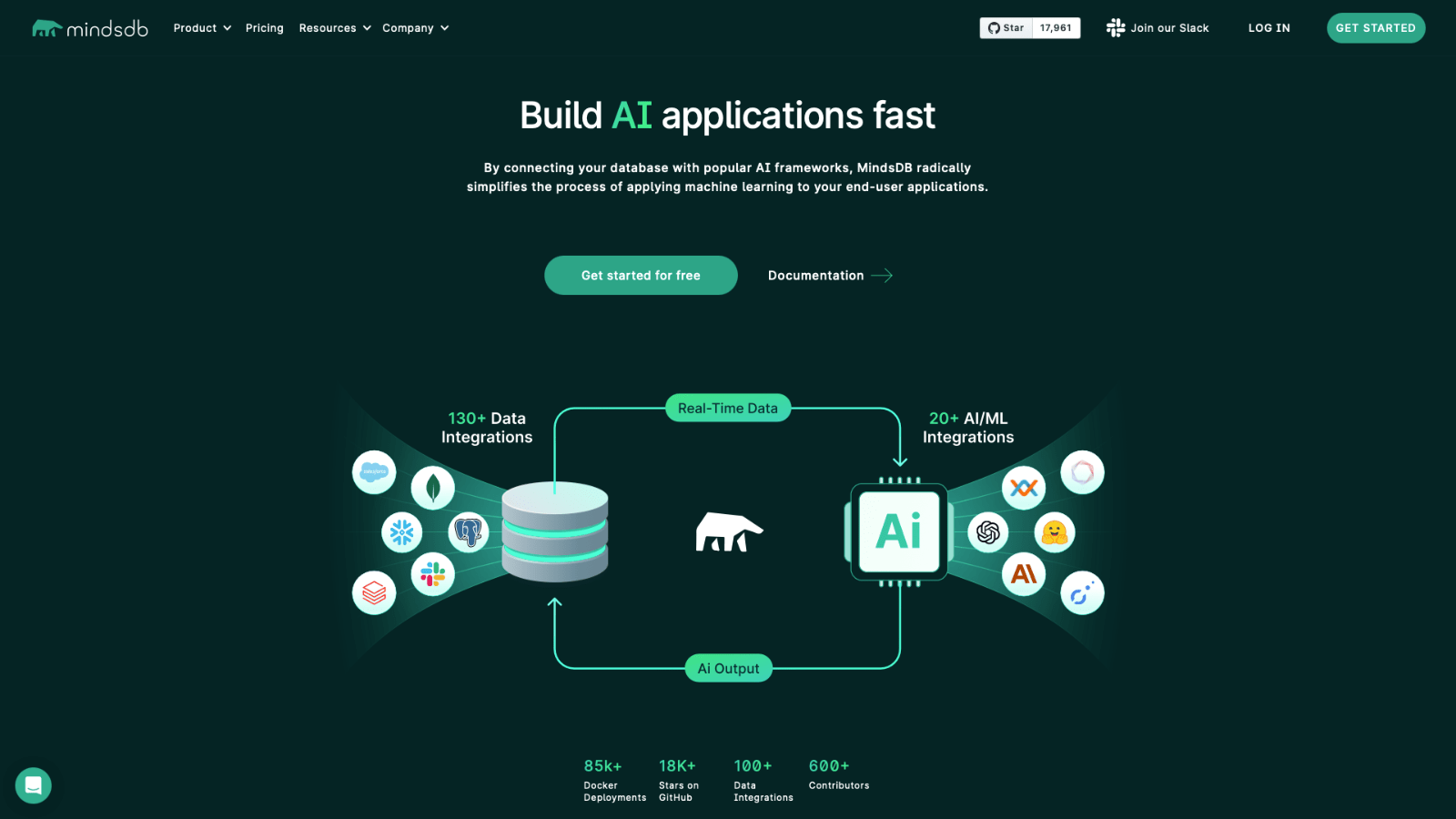

MindsDB is an AI-first database. It integrates with lots of different data sources like Slack or Shopify, Postgres, and more. It also ingests real-time data and can apply advanced machine learning and AI to our stored data.

And then can enrich that data again, and that can loop around so we for incredibly powerful functionality. For example, you might use Minds to build your own custom chatbot or large language model.

Or, it could be used to build fraud or anomaly detection. If you are thinking about building an application using AI, then doing that at the database layer provides countless exciting opportunities.

In terms of pricing, there's a demo tier where you can just get up and running and have a look at it for free. There's also an open-source version that you can deploy yourself in one click one click.

So - MindsDB fits several of the trends that we've talked about over the course of this guide.

We have multi-model, cloud-first infrastructure and we're adding in these extra AI components to make it an incredibly powerful way to build performant, AI-based applications.

Join 300,000 teams running operations on Budibase

Get started for free8. Databases & low-code development

At BudiBase, we empower teams to turn your data into action.

Wherever your business data lives, we are on a mission to help teams build professional, performant internal tools at pace.

We offer dedicated connectors to build UIs and processes on top of Postgres, MySQL, MSSQL, Couch, Mongo, Arango, S3, Oracle, Google Sheets, REST API, and many more - alongside support for custom data sources.

If you want to build an application and don't have a data source set up yet, we even have our own internal database. BudibaseDB offers a fast, intuitive way to build a data model from scratch - including support for relationships and JavaScript formula variables.

Budibase saves businesses thousands in development costs. Check out our features overview to learn more.